Tuning SQL Server’s

Network Performance

To communicate with a SQL Server database, the widely misunderstood TCP protocol from the TCP/IP suite is used. SQL Server implements its Tabular Data Stream (TDS) protocol at the application layer of TCP/IP’s layered model.

The layers in this model correspond to the numbering of the Open Systems Interconnection (OSI) model, on which TCP/IP is based. Each layer communicates with its matching layer on remote systems through one or more protocols. When tuning SQL Server’s network performance, it is crucial to consider the transport layer, which handles error and flow control for SQL Server.

Identifying Network Performance Bottlenecks

When data hits the network, there are a number of problems that can cause poor performance. With the exception of poorly implemented applications, the transport layer is designed to assist with the following performance issues.

- Packet loss: Occurs due to congestion or an unreliable connection.

- Spurious retransmission: Slow links can lead to packet loss and result in duplicate packet transmission.

- Underutilization: Failing to fully utilize high-bandwidth connections with high latency, like many WAN connections do, causes issues of efficiency, resource allocation, and user experience.

- Poorly Implemented Applications: Inefficient use of TCP/IP (or TDS in the case of SQL Server) can contribute to network performance issues.

Suggestions for tuning and settings:

From a strictly SQL Server perspective there are a few things that can be done to improve network performance. The first tunable item is the size of the SQL Server network packet. Ignore the incorrect advice of making this size the same as your network’s Maximum Transmission Unit (MTU) size, typically just 1500 bytes. As it turns out, the TCP protocol, which SQL Server leverages, allows up to 64KB (with IPv4) chunks to be handed to it and it manages the task of dividing that up into smaller pieces for optimal transmission. In fact, setting the SQL Server packet size to such a small value causes more network overhead because each packet must have its own TDS header and requires SQL Server to do the dividing and re-assembly of the data. As a result, the maximum setting of 32 KB can be the best choice even if your environment only occasionally sends large query results.

Other items you can do to impact performance of SQL Server network performance include:

- Using the very latest SQL driver available. Microsoft has greatly improved the performance of its TDS drivers over time; therefore using the latest is important.

- Avoid using the connection string setting “Multiple Active Result Sets” because it is horribly inefficient. Instead use multiple connections with connection pooling.

- Use asynchronous processing with threads in your applications.

- For connections that manipulate large datasets, request 32k packets.

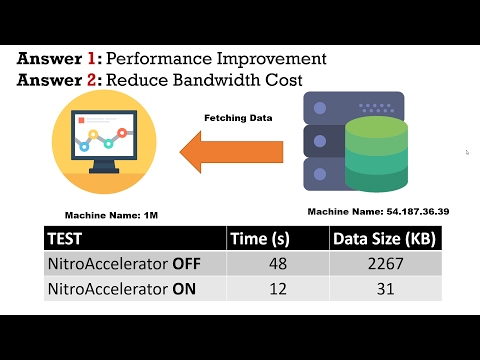

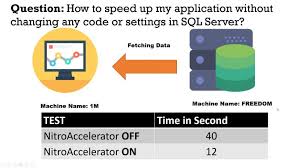

Now, change the equation with NitroAccelerator:

While there are a number of things that can be done to marginally improve network performance, bringing NitroAccelerator into the picture changes the equation completely by:

- Transforming TDS into an optimized stream of variable length packets that makes your WAN operate at LAN speeds.

- Optimizing both the application and transport layers.

- Reducing the number of TCP packets sent by up to 90%.

- Improving latency by up to 90%.

- Multiplying the impact of other performance tuning. – For example, increasing to 32K packet size can improve performance by an additional 400% with NitroAccelerator.

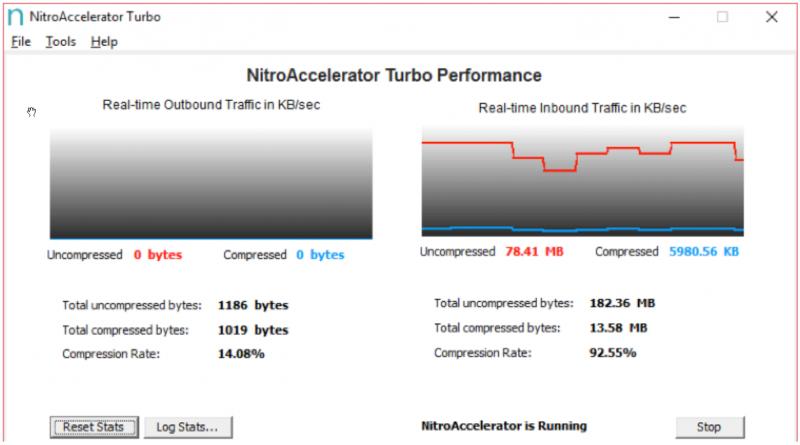

There’s a visible difference on all networks

With NitroAccelerator optimizing your SQL Server traffic, you not only speed up your data intensive SQL Server processes, you also free up space on your network as shown in the three graphs below.

This first chart shows the instantaneous bytes per second transferred for a mix of small and large query results being run without NitroAccelerator:

With the same set of queries, this graph shows the instantaneous bytes per second transferred with NitroAccelerator. Notice that with the exception of a very small peek, NitroAccelerator leaves significantly more headroom on your network for other traffic.

Finally, this chart shows the bandwidth your network would have to be capable of in order to provide the same performance that NitroAccelerator is making possible. The theoretical throughput in this case would be over 1 billion bytes per second over a WIFI connection.